Customer feedback doesn’t raise customer satisfaction on its own. It raises satisfaction when it helps you pinpoint what’s breaking the experience, fix it where it happens and follow up in a way customers can actually feel.

Most teams aren’t short on feedback. There’s a CSAT (Customer Satisfaction Score) question after support tickets, an NPS (Net Promoter Score) pulse every quarter, plus reviews coming in from third-party sites. The problem is what you do with the incoming data: comments land in inboxes, patterns stay fuzzy, ownership is unclear and customers keep running into the same friction, so CSAT stays flat.

This guide shows you how to turn feedback into clear decisions, shipped fixes and measurable CSAT lift, without building a heavyweight Voice of the Customer (VoC) programme.

TL;DR – Article summary

Measure satisfaction at the touchpoints that genuinely shape the experience (support resolution, onboarding milestones, checkout, delivery/appointments, renewals/cancellation) and always ask one short “why” question. Translate open text into a small set of recurring drivers (theme + where it happened + root cause). Prioritise consistently (Impact × Frequency × Effort), assign a named owner and set a concrete next step so issues don’t stall. Close the loop with customers so improvements become visible. Then prove what worked by tracking touchpoint-level CSAT before and after each change, alongside one operational metric (drop-off, error rate, resolution time, repeat contact rate) and the volume of feedback tied to that driver.

- What is customer satisfaction and CSAT?

- How do you measure customer satisfaction?

- What customer satisfaction tells you (and what it doesn’t)

- The 7-step feedback loop that lifts customer satisfaction over time

- Why feedback programmes fail to improve customer satisfaction

- Making this scalable with the right tooling

What is customer satisfaction and CSAT?

Customer satisfaction is the outcome you care about: how customers feel after an experience with your brand, product or service. Did you meet expectations? Did it feel easy? Would they choose you again?

CSAT (Customer Satisfaction Score) is one way to measure that outcome. It’s a short question that produces a score, usually asked right after a specific interaction, like a support resolution, checkout, delivery or onboarding milestone.

They’re closely linked, but they’re not the same thing. Customer satisfaction is the experience and perception. CSAT is the metric that helps you track it consistently at key moments.

In the rest of this guide, we’ll treat customer satisfaction as the goal and CSAT as a practical, touchpoint-level measure that helps you spot what’s helping or hurting satisfaction, so you can fix it.

How do you measure customer satisfaction?

There isn’t one perfect “customer satisfaction metric”, because satisfaction changes depending on the moment and channel. Most teams use a mix of:

- CSAT: how satisfied someone felt with a specific interaction (“How satisfied were you with…?”)

- NPS: overall loyalty and likelihood to recommend

- CES: how easy something felt (“How easy was it to…?”)

For this article, CSAT is the best fit because it’s touchpoint-based. It helps you see where satisfaction is being created or destroyed (support, onboarding, checkout, delivery, renewals) and that makes it easier to connect feedback to specific fixes.

The simplest CSAT setup is two questions:

- A rating question (your CSAT score)

- One open question to capture the reason (“What’s the main reason for your score?”)

The score tells you that something happened. The “why” tells you what to change.
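To make the two-question setup concrete, here is a minimal sketch of how the answers could be scored. It assumes a 1-5 rating scale and the common "top-2-box" convention, where CSAT is the percentage of respondents who answer 4 or 5. Both are assumptions rather than a fixed standard, and the class name, function names and sample responses are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CsatResponse:
    rating: int  # answer to the rating question, assumed 1-5 (5 = very satisfied)
    reason: str  # answer to "What's the main reason for your score?"


def csat_score(responses: list[CsatResponse], satisfied_threshold: int = 4) -> float:
    """Percentage of responses rated at or above the threshold (top-2-box on a 1-5 scale)."""
    if not responses:
        raise ValueError("no responses to score")
    satisfied = sum(1 for r in responses if r.rating >= satisfied_threshold)
    return 100.0 * satisfied / len(responses)


def low_score_reasons(responses: list[CsatResponse], satisfied_threshold: int = 4) -> list[str]:
    """The 'why' behind unsatisfied ratings: the comments worth reading first."""
    return [r.reason for r in responses if r.rating < satisfied_threshold]


# Hypothetical sample responses, for illustration only
responses = [
    CsatResponse(5, "Agent solved it on the first reply"),
    CsatResponse(4, "Quick, but I had to repeat my order number"),
    CsatResponse(2, "Still waiting for my refund"),
    CsatResponse(5, "Clear instructions"),
]
print(csat_score(responses))         # 75.0
print(low_score_reasons(responses))  # ['Still waiting for my refund']
```

The score alone says three out of four customers were satisfied. The reason attached to the low score is the part that points to a fix.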

What customer satisfaction tells you (and what it doesn’t)

CSAT is a moment-specific signal: did you meet expectations at that point in time?

That’s why touchpoint CSAT is so useful. Someone can love your product but hate delivery. Trust your brand but feel let down by support. Be happy overall but churn because one renewal interaction went badly.

If you only track one overall satisfaction score, you end up with vague actions like “improve service” or “streamline onboarding”. Those phrases hide multiple issues, which makes it hard to decide what to fix first and even harder to prove what moved the needle.

To lift CSAT reliably, you need two things working together:

- feedback captured at the moments that create (or destroy) satisfaction

- a repeatable way to turn that feedback into improvements

The 7-step feedback loop that lifts customer satisfaction over time

Step 1 – Measure satisfaction in context, not in the abstract

A generic “How satisfied are you with us?” gives you a number that’s hard to act on. Even if it drops, you’re left guessing which part of the experience caused it.

Instead, measure satisfaction where you can do something with the result: after support resolution, after checkout, during onboarding milestones, after delivery/appointments and around renewal, upgrade or cancellation.

Keep it simple:

- “How satisfied were you with your experience today?”

- “What’s the main reason for your score?”

The score tells you something happened. The reason tells you what to fix.

If you want examples of what to capture in digital journeys (and where), this overview of website feedback patterns is a good starting point.

Step 2 – Ask while the customer still remembers the details

Feedback loses value fast when it arrives late. Ask a week after an interaction and you’ll get a vague answer (if you get an answer at all).

Speed also changes how people judge your response. Research on complaint handling in social channels found that quicker responses (both first response and final resolution) are linked to higher satisfaction with complaint handling. Another study on online managerial responses found that faster, more personalised responses can increase satisfaction because people read them as a sign of competence.

The best systems combine two types of feedback:

- Transactional feedback right after an interaction (support, delivery, onboarding milestone)

- In-journey feedback while someone is using your site/app and friction is happening

The goal isn’t “more surveys”. It’s fewer questions, asked at the moments that matter.

In digital journeys, those moments are often visible in behaviour: lingering on pricing, abandoning checkout, looping on an error or bouncing around the help centre.

That’s where short, in-the-moment prompts tend to outperform a generic “tell us what you think” form, because the context is still there.
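The behavioural moments described above can be expressed as simple trigger rules that decide when to show a short prompt. The sketch below is hypothetical: the `SessionSignals` fields, the thresholds and the question wording are illustrative placeholders, not recommended values and not the API of any particular feedback tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SessionSignals:
    # Hypothetical behavioural signals for one visitor session
    page: str
    seconds_on_page: float = 0.0
    same_error_count: int = 0       # times the same error was shown in this session
    checkout_abandoned: bool = False
    help_pages_visited: int = 0


def pick_prompt(s: SessionSignals) -> Optional[str]:
    """Return one short in-the-moment question, or None if no trigger fires.

    Rules are checked in order, so at most one prompt is shown per session.
    Thresholds are illustrative placeholders only.
    """
    if s.same_error_count >= 3:
        return "Something seems to be going wrong. What are you trying to do?"
    if s.checkout_abandoned:
        return "What stopped you from completing your order?"
    if s.page == "pricing" and s.seconds_on_page >= 60:
        return "Is anything unclear about our pricing?"
    if s.help_pages_visited >= 4:
        return "Did you find what you were looking for?"
    return None


print(pick_prompt(SessionSignals(page="checkout", same_error_count=3)))
print(pick_prompt(SessionSignals(page="home")))  # None
```

Returning at most one question per session reflects the "fewer questions, asked at the moments that matter" principle.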

Step 3 – Turn comments into patterns teams can work with

Most teams get stuck because every comment is treated like a one-off story. You end up with lots of “interesting feedback” and not much you can plan around.

What you need are patterns, and you don’t need a complicated tagging system to get there.

A lightweight structure is enough:

- Theme: what the customer is talking about

- Where: where it happened (touchpoint, page, channel, product area)

- Driver: the underlying cause

Example comment

“The checkout is so annoying. It keeps failing.”

Useful output

- Theme: Checkout / payment

- Where: Payment step (mobile)

- Driver: 3D Secure (an extra card verification step) fails and the error message doesn’t explain what to do next

That last line is where action lives, while the first two help you identify trends in friction areas.

A helpful mental model is symptom vs driver. Customers describe symptoms (“confusing”, “slow”, “annoying”). Your job is to identify the driver (“too many steps”, “unclear copy”, “approval takes 48 hours”, “payment method fails on iOS 17”).
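The Theme / Where / Driver structure maps naturally onto a small record, and counting identical combinations is one simple way to surface recurring drivers. This sketch assumes the comments have already been tagged (manually or by a tool); the `TaggedFeedback` name, the `min_count` cut-off and all sample comments apart from the checkout example above are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class TaggedFeedback:
    comment: str  # what the customer wrote (the symptom)
    theme: str    # what they are talking about
    where: str    # touchpoint, page, channel or product area
    driver: str   # the underlying cause you can act on


def recurring_drivers(items: list[TaggedFeedback], min_count: int = 2):
    """Count (theme, where, driver) combinations and keep those seen at least min_count times."""
    counts = Counter((f.theme, f.where, f.driver) for f in items)
    return [(key, n) for key, n in counts.most_common() if n >= min_count]


driver_3ds = "3D Secure fails; error message gives no next step"
feedback = [
    TaggedFeedback("The checkout is so annoying. It keeps failing.",
                   "Checkout / payment", "Payment step (mobile)", driver_3ds),
    # Hypothetical additional comments for illustration
    TaggedFeedback("Card got rejected twice, no idea why",
                   "Checkout / payment", "Payment step (mobile)", driver_3ds),
    TaggedFeedback("Delivery was late",
                   "Delivery", "Courier handover", "Dispatch cut-off missed"),
]

for (theme, where, driver), n in recurring_drivers(feedback):
    print(f"{n}x {theme} @ {where}: {driver}")
```

Two differently worded complaints ("so annoying", "rejected twice") collapse into one driver, which is the symptom-to-driver step in practice.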

If you want a broader framework for collecting, analysing and closing the loop, this guide to customer feedback management provides useful context.

Step 4 – Prioritise based on impact, not noise

CSAT often doesn’t move because teams act on the loudest feedback rather than the most important friction.

A simple method that stays consistent across teams is:

Impact × Frequency × Effort

- Impact: if you fix this, how much could CSAT improve at that touchpoint?

- Frequency: how often it appears (in feedback and in behaviour)

- Effort: how hard it is to fix (score it inversely, so lower-effort fixes rise in priority)

You don’t need perfect scoring. You need a repeatable way to decide what to prioritise next.
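One way to make Impact × Frequency × Effort repeatable is to score each factor on a 1-5 scale and invert effort before multiplying, so a hard fix lowers the score instead of raising it. The 1-5 scales, the `6 - effort` inversion and the example drivers are assumptions for illustration, not a prescribed scoring model.

```python
from dataclasses import dataclass


@dataclass
class Driver:
    name: str
    impact: int     # 1-5: how much touchpoint CSAT could improve if fixed
    frequency: int  # 1-5: how often it shows up in feedback and behaviour
    effort: int     # 1-5: how hard it is to fix (5 = hardest)

    def __post_init__(self):
        for field_name in ("impact", "frequency", "effort"):
            value = getattr(self, field_name)
            if not 1 <= value <= 5:
                raise ValueError(f"{field_name} must be between 1 and 5, got {value}")


def priority_score(d: Driver) -> int:
    # Invert effort (6 - effort) so easier fixes score higher:
    # effort 1 contributes 5, effort 5 contributes 1.
    return d.impact * d.frequency * (6 - d.effort)


# Hypothetical drivers for illustration
drivers = [
    Driver("3D Secure error gives no next step", impact=5, frequency=4, effort=2),
    Driver("Delivery slot emails arrive late", impact=3, frequency=3, effort=1),
    Driver("Rebuild onboarding flow", impact=4, frequency=2, effort=5),
]

for d in sorted(drivers, key=priority_score, reverse=True):
    print(priority_score(d), d.name)
```

The exact numbers matter less than using the same scales every time, so that teams argue about inputs rather than about the method.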

To keep prioritised drivers from disappearing into meetings, track each one with an owner, a priority, a target date and what you’ll measure after release. That’s what turns “insights” into work.
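The tracking fields listed above fit a simple record, and a small check can flag drivers that are drifting: no named owner, no next step, or a missed target date. The `DriverTicket` and `stalled` names, the sample owner and all dates are hypothetical; a spreadsheet with the same columns would serve the same purpose.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class DriverTicket:
    driver: str
    owner: Optional[str]         # a named person, not just a team
    priority: int                # e.g. the score from your prioritisation step
    target_date: Optional[date]
    next_step: Optional[str]     # even "investigate root cause" counts
    success_metric: str          # what you'll measure after release


def stalled(tickets: list[DriverTicket], today: date) -> list[tuple[str, str]]:
    """Return (driver, problem) pairs for tickets at risk of stalling."""
    problems = []
    for t in tickets:
        if not t.owner:
            problems.append((t.driver, "no named owner"))
        if not t.next_step:
            problems.append((t.driver, "no next step"))
        if t.target_date and t.target_date < today:
            problems.append((t.driver, "past target date"))
    return problems


# Hypothetical tickets for illustration
tickets = [
    DriverTicket("3D Secure error gives no next step", "J. Doe (product/UX)", 80,
                 date(2025, 3, 31), "Rewrite payment error copy",
                 "checkout CSAT, payment error rate"),
    DriverTicket("Delivery slot emails arrive late", None, 45, None, None,
                 "delivery CSAT, repeat contact rate"),
]

for driver, problem in stalled(tickets, today=date(2025, 4, 1)):
    print(f"{driver}: {problem}")
```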

Step 5 – Assign owners or nothing changes

This is where feedback programmes quietly stall: everyone agrees on the issues, but nobody owns the fix.

The rule is simple: every recurring driver needs a named owner and a next step.

Ownership often looks like this:

- Checkout friction → product/UX

- Delivery questions → operations

- Billing clarity → finance/ops + product

- Support experience → support leadership
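The ownership examples above can double as a default routing table, so every tagged driver lands with a team that then names a person. The theme keys, the matching rule and the fallback triage owner are hypothetical choices for this sketch; the point of the fallback is that an unknown theme still goes to someone rather than to nobody.

```python
# Default owning team per theme, mirroring the ownership examples above.
# Keys and the fallback owner are hypothetical; adjust to your organisation.
DEFAULT_OWNERS = {
    "checkout": "product/UX",
    "delivery": "operations",
    "billing": "finance/ops + product",
    "support": "support leadership",
}


def route(theme: str, fallback: str = "CX lead (triage)") -> str:
    """Pick the owning team for a theme such as 'Checkout / payment'.

    Uses the part before any '/' as the lookup key; unknown themes go to a
    triage owner instead of being left unassigned.
    """
    key = theme.lower().split("/")[0].strip()
    return DEFAULT_OWNERS.get(key, fallback)


print(route("Checkout / payment"))  # product/UX
print(route("Returns"))             # CX lead (triage)
```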

Even if the next step is “investigate root cause”, put a name next to it. Otherwise, it becomes “someone should look into this” and it won’t move.

Step 6 – Close the loop with customers, so change becomes visible

Closing the loop means following up after feedback and showing customers their input led somewhere.

It does two things:

- it can rescue the relationship with that individual customer

- it builds trust that makes satisfaction more resilient over time

There are two loops:

- Individual loop: respond to the person and resolve the case

- System loop: fix the driver so fewer customers hit the same issue

Good follow-ups stay simple: acknowledge what happened, explain what you’re doing next, give a timeline or next step and thank them for helping you improve.

And don’t keep improvements private. A short “You said / we did” update in release notes, a newsletter or your help centre makes the change visible, and visibility is what turns “we listened” into trust.

Forrester’s 2025 feedback management research highlights that many teams do respond to survey feedback but often miss opportunities, including underusing one-to-many updates and responding only to negative feedback. That’s exactly why a visible “you said / we did” habit matters.

Step 7 – Prove what worked, so the loop keeps running

Feedback only lifts your customer satisfaction when you can link actions to outcomes.

Track impact at touchpoint level:

- CSAT before the change

- what changed (and when)

- CSAT after the change

- volume of feedback mentioning the driver over time

- one operational metric that should move with it (drop-off rate, error rate, resolution time, repeat contact rate)

Example

Problem: “payment failed” comments rise; checkout CSAT drops

Action: improve error messaging, add a payment method, remove a confusing step

Measure: checkout CSAT, payment error rate, payment drop-off, and feedback volume mentioning “payment failed”
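For the checkout example, the before/after check can be as simple as splitting responses at the release date and comparing touchpoint CSAT and the share of comments that mention the driver. The sample responses, the release date and the top-2-box CSAT definition (ratings of 4 or 5 on an assumed 1-5 scale) are hypothetical. A plain before/after split can't rule out other causes such as seasonality or a change in traffic mix, which is why it's worth reading alongside an operational metric like payment error rate.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Response:
    day: date
    rating: int   # checkout CSAT rating, assumed 1-5
    comment: str  # answer to the "why" question


def pct(group, predicate):
    """Percentage of responses in group matching predicate (NaN for an empty group)."""
    if not group:
        return float("nan")
    return 100.0 * sum(1 for r in group if predicate(r)) / len(group)


def before_after(responses, change_day, phrase, satisfied_threshold=4):
    """Compare CSAT and the share of comments mentioning a driver around a release date."""
    phrase = phrase.lower()
    before = [r for r in responses if r.day < change_day]
    after = [r for r in responses if r.day >= change_day]

    def satisfied(r):
        return r.rating >= satisfied_threshold

    def mentions(r):
        return phrase in r.comment.lower()

    return {
        "csat_before": pct(before, satisfied),
        "csat_after": pct(after, satisfied),
        "mention_share_before": pct(before, mentions),
        "mention_share_after": pct(after, mentions),
    }


# Hypothetical responses around a hypothetical release on 10 March 2025
sample = [
    Response(date(2025, 3, 1), 2, "Payment failed twice"),
    Response(date(2025, 3, 2), 5, "Smooth"),
    Response(date(2025, 3, 3), 1, "payment failed, gave up"),
    Response(date(2025, 3, 4), 4, "Fine"),
    Response(date(2025, 3, 12), 5, "Quick checkout"),
    Response(date(2025, 3, 13), 4, "OK"),
    Response(date(2025, 3, 14), 2, "Payment failed once"),
    Response(date(2025, 3, 15), 5, "Easy"),
]
print(before_after(sample, date(2025, 3, 10), "payment failed"))
```

Using the share of mentions rather than a raw count keeps the comparison fair when response volume differs between the two periods.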

This is the difference between a feedback programme that reports and one that improves. If the output is dashboards and nothing changes, customers won’t feel a difference. The loop works when improvements keep getting implemented and you can show the impact.

Why feedback programmes fail to improve customer satisfaction

If you want satisfaction to move, avoid these traps:

- Measuring without diagnosing (CSAT tells you what, not why)

- Treating every comment equally (no prioritisation = scattered effort)

- No ownership (insights don’t become work)

- No follow-up (customers feel ignored)

- Reporting instead of improving (activity without change)

If you recognise one of these, the fix usually isn’t “more feedback”. It’s tightening the link between touchpoint → driver → owner → action → proof.

Making this scalable with the right tooling

At low volume, you can run this with spreadsheets and manual tagging. Once feedback comes in across journeys, channels and teams, manual processes become the bottleneck: context gets lost, routing slows down and it becomes harder to track what actually got fixed.

Tooling is most useful when it protects context and speeds up action, especially when it helps you:

- capture feedback in journeys and after interactions

- combine scores with context (page, device, journey step, segment)

- analyse themes and drivers at scale

- route insights to the teams who can act

- track actions and connect changes to CSAT over time

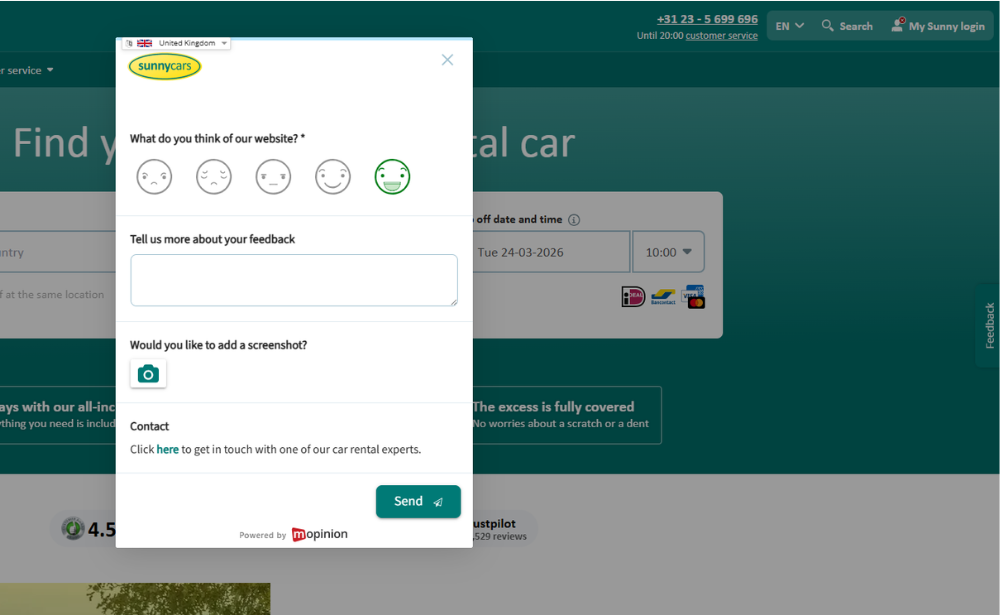

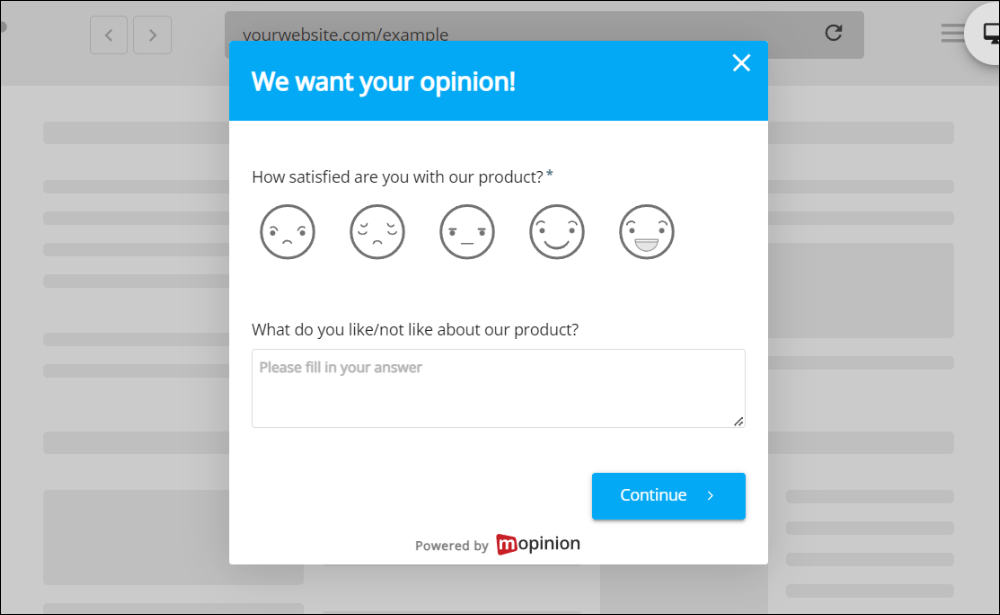

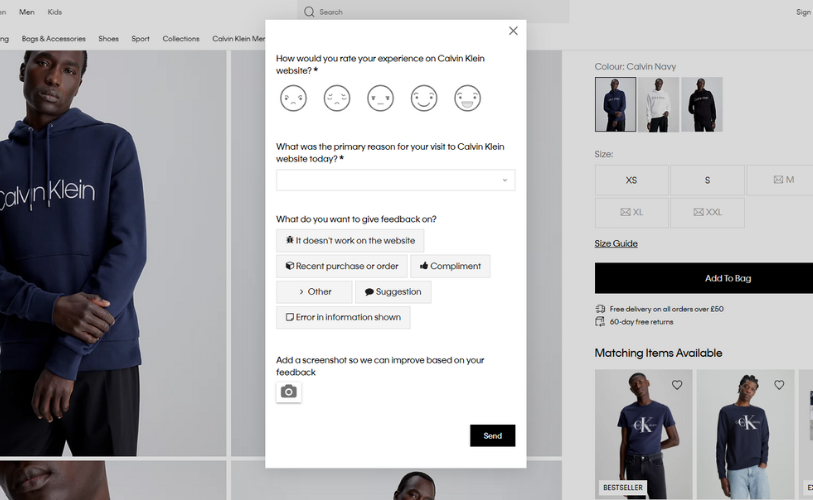

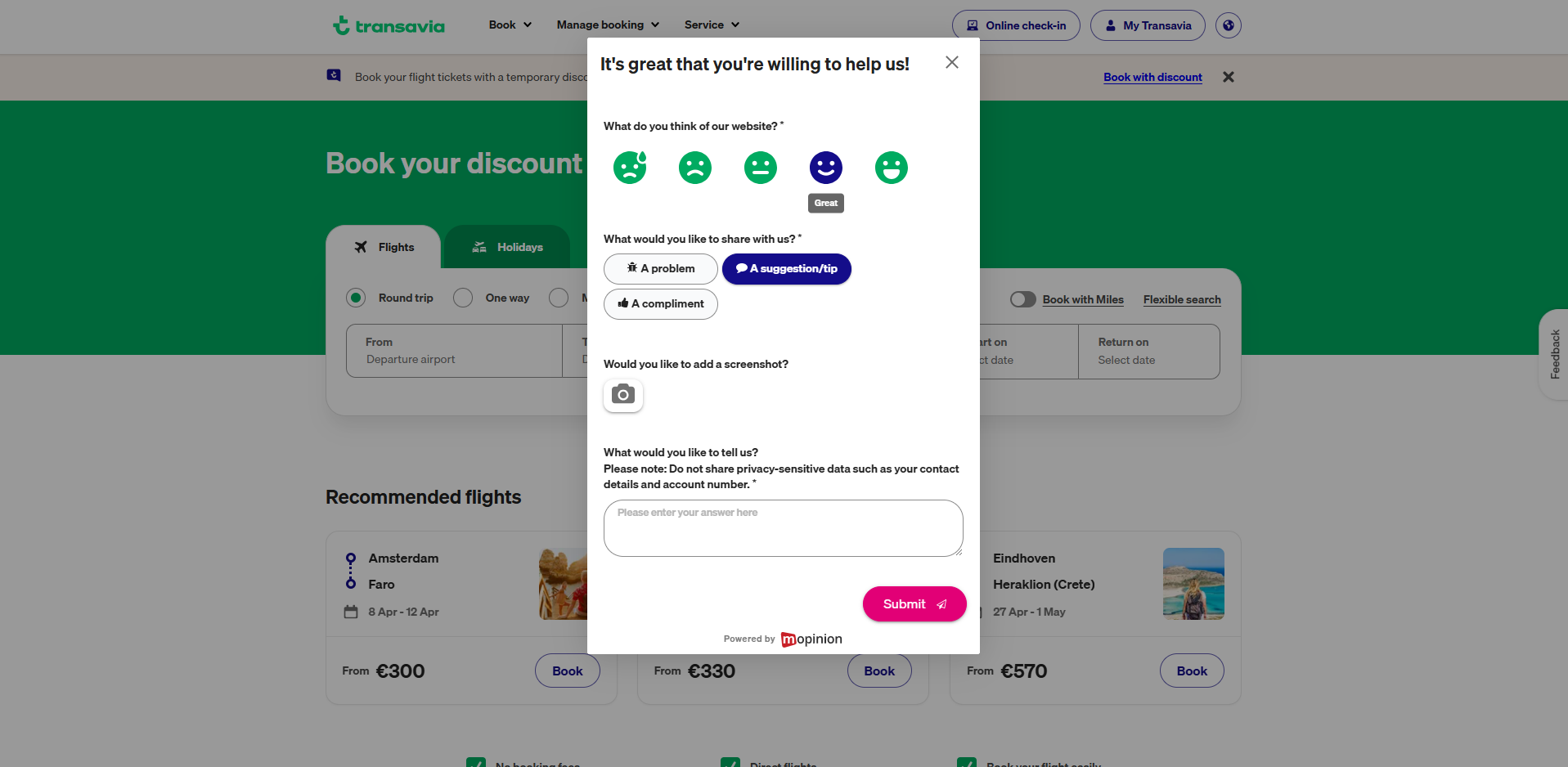

If your focus is improving digital experiences, Mopinion (part of Netigate) supports this loop with in-the-moment website and app feedback, behavioural context and analysis/routing features that help teams move from insight to action faster.

Ready to see Mopinion in action?

Want to learn more about Mopinion’s all-in-1 user feedback platform? Don’t be shy and take our software for a spin! Prefer a more personal approach? Just book a demo. One of our feedback pros will guide you through the software and answer any questions you may have.